Lark ×(GPT-4 + DALL·E + Whisper)

🚀 Lark OpenAI 🚀

🗣 Voice communication: talk to the bot directly in private chats

💬 Multi-topic dialogue: supports multiple topics in private and group chats, keeping discussions efficient and coherent

🖼 Text to image: supports text-to-image generation and search by image

🛖 Scene presets: built-in list of scenes, switch the AI role with one click

🎭 Role play: supports scene mode for more fun and creative discussions

🤖 AI modes: four built-in AI modes that showcase the wisdom and creativity of AI

🔄 Context preservation: reply to a message to continue the same topic

⏰ Automatic end: conversations end automatically on timeout; clearing the discussion history is supported

📝 Rich text cards: replies can use rich text cards for more expressive output

👍 Interactive feedback: get the bot's processing results instantly

🎰 Balance query: check token consumption in real time

🔙 History rollback: easily return to an earlier conversation and continue the discussion 🚧

🔒 Administrator mode: built-in administrator mode for safer, more reliable use 🚧

🌐 Multi-token load balancing: optimized for high-frequency calls in production

↩️ Reverse proxy support: faster, more stable access for users in different regions

📚 Feishu document interaction: become a super assistant for enterprise employees 🚧

🎥 Topic to PPT in seconds: make your reports simpler from now on 🚧

📊 Table analysis: easily import Feishu tables to improve data analysis efficiency 🚧

🍊 Private data training: use the company's product information for secondary GPT training to better meet customers' individual needs 🚧

- 🍏 The dialogue is based on OpenAI-GPT4 and Lark

- 🥒 Supports Serverless, local, Docker, and binary package deployment

Run On Replit

The fastest way to deploy lark-openai to Replit is to click the button below.

Remember to switch to the Secrets tab and edit the system environment variables. You can also edit the raw JSON:

{

"BOT_NAME": "Lark-OpenAI",

"APP_ID": "",

"APP_SECRET": "",

"APP_ENCRYPT_KEY": "",

"APP_VERIFICATION_TOKEN": "",

"OPENAI_KEY": "sk-xx",

"OPENAI_MODEL": "gpt-3.5-turbo",

"APP_LANG": "en"

}

Final callback addresses are:

https://YOUR_ADDRESS.repl.co/webhook/event

https://YOUR_ADDRESS.repl.co/webhook/card
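The two callback addresses above follow one pattern: the public base address plus a fixed route. A minimal Go sketch of that pattern is shown here; the function name callbackURLs is hypothetical and is not taken from the project's code, only the routes /webhook/event and /webhook/card come from this README.

```go
package main

import (
	"fmt"
	"strings"
)

// callbackURLs appends the two webhook routes named in this README to a
// public base address. The function name is hypothetical; only the routes
// /webhook/event and /webhook/card come from the README.
func callbackURLs(base string) (event, card string) {
	base = strings.TrimRight(base, "/") // tolerate a trailing slash
	return base + "/webhook/event", base + "/webhook/card"
}

func main() {
	event, card := callbackURLs("https://YOUR_ADDRESS.repl.co")
	fmt.Println("Event callback:", event)
	fmt.Println("Card callback: ", card)
}
```

The same helper applies to any of the deployment options in this README, since each one only changes the base address.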

Local Development

git clone git@github.com:ConnectAI-E/lark-openai.git

cd lark-openai/code

If your server does not have a public IP address, you can use a reverse proxy.

Feishu's servers are slow to reach ngrok from within China, so a domestic reverse proxy provider (cpolar is used below) is recommended.

# Configure config.yaml

mv config.example.yaml config.yaml

# Test deployment

go run ./

cpolar http 9000

# Production deployment

nohup cpolar http 9000 -log=stdout &

# Check server status at https://dashboard.cpolar.com/status

# Take down the service

ps -ef | grep cpolar

kill -9 PID

Serverless Development

git clone git@github.com:ConnectAI-E/lark-openai.git

cd lark-openai/code

Install the Serverless Devs tool:

# Configure config.yaml

mv config.example.yaml config.yaml

# Install the Serverless Devs CLI

npm install @serverless-devs/s -g

After the installation is complete, deploy by following the steps below that match your local environment.

- Local Linux/macOS environment

- Modify the deployment region and deployment key in s.yaml

edition: 1.0.0

name: lark-openai

access: "aliyun" # Modify the custom key name.

vars: # Global variables

  region: "cn-hongkong" # Modify the region where the cloud function should be deployed.

- One-click deployment

cd ..

s deploy

- Local Windows environment

- First open the cmd command prompt and run go env to check the Go environment variable settings on your computer. Confirm the following variables and values:

set GO111MODULE=on

set GOARCH=amd64

set GOOS=linux

set CGO_ENABLED=0

If a value is incorrect, for example set GOOS=windows on your computer, run the following command to set the GOOS variable:

go env -w GOOS=linux

- Modify the deployment region and deployment key in s.yaml

edition: 1.0.0

name: lark-openai

access: "aliyun" # Modify the custom key alias

vars: # Global variables

  region: "ap-southeast-1" # Modify the desired deployment region for the cloud functions

- Modify pre-deploy in s.yaml: remove the environment variable assignments in front of the go build command in the second run step

pre-deploy:

  - run: go mod tidy

    path: ./code

  - run: go build -o target/main main.go # removed: GO111MODULE=on GOOS=linux GOARCH=amd64 CGO_ENABLED=0

    path: ./code

- One-click deployment

cd ..

s deploy

Railway Deployment

Just configure environment variables on the platform. The process of deploying this project is as follows:

Click the button below to create a corresponding Railway project, which will automatically fork this project to your GitHub account.

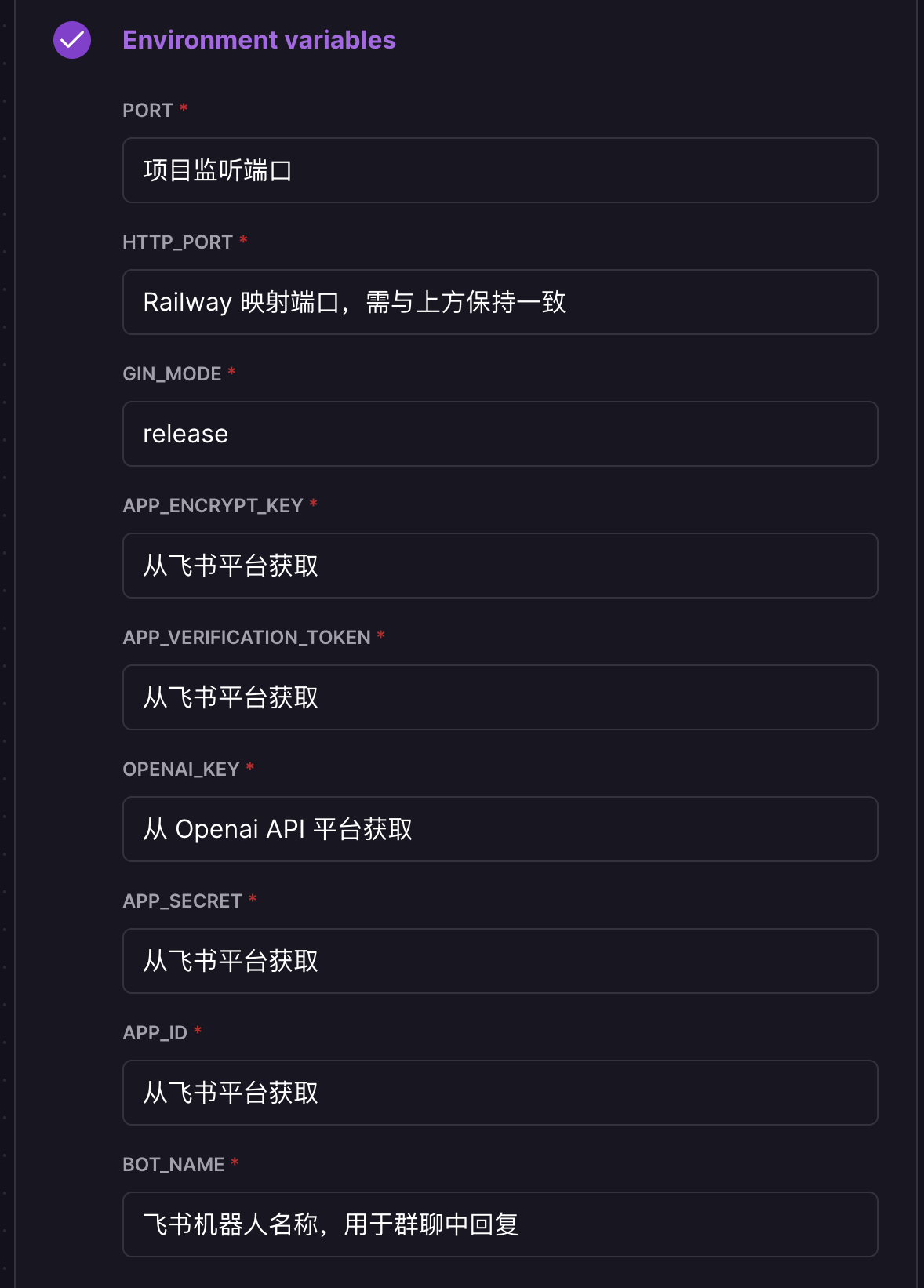

In the opened page, configure the environment variables. The description of each variable is shown in the figure below:

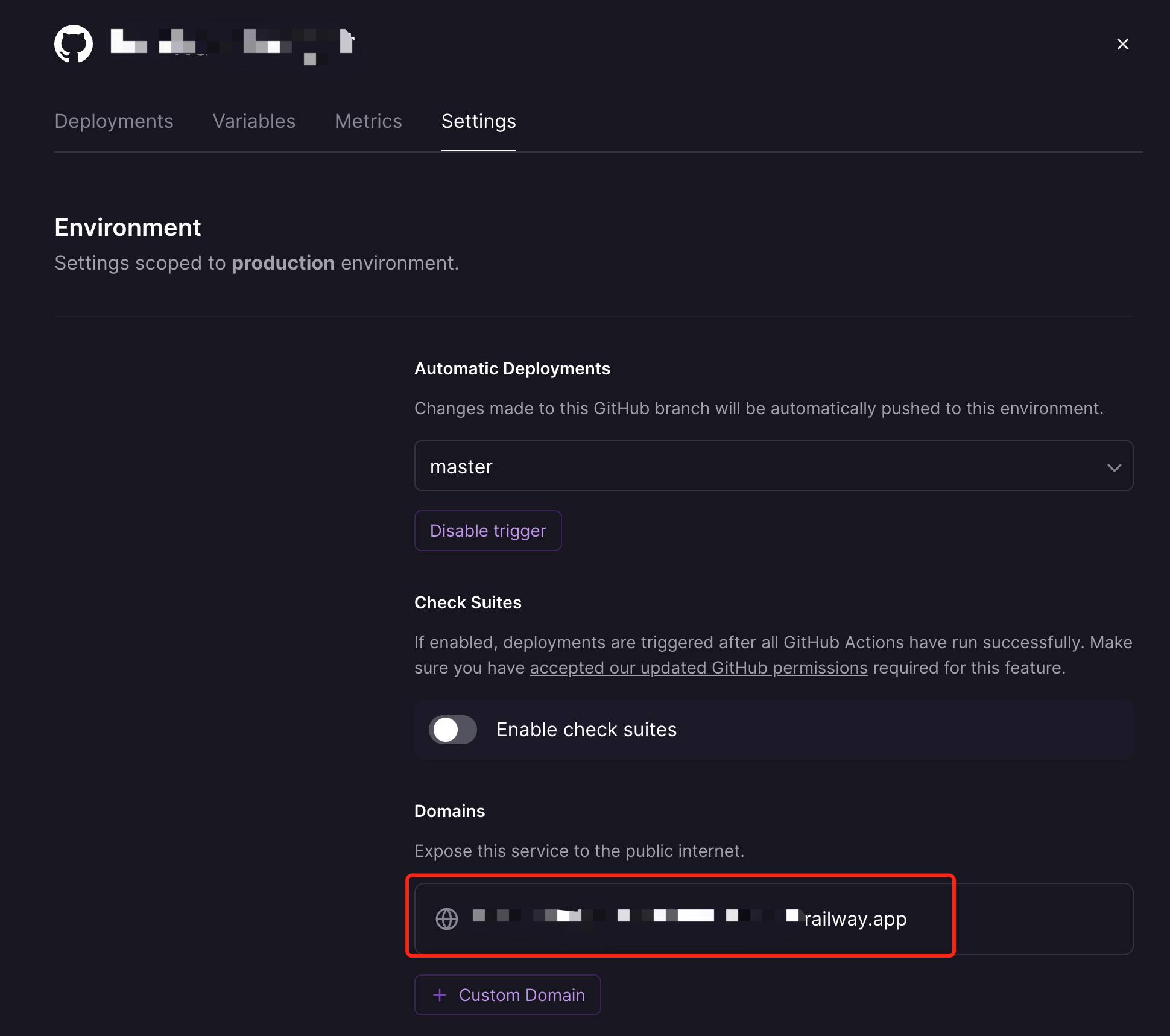

After filling in the environment variables, click Deploy to complete the deployment of the project. After the deployment is complete, you need to obtain the corresponding domain name for the Feishu robot to access, as shown in the following figure:

If you are unsure whether the deployment succeeded, open the /ping path on the domain name obtained above (https://xxxxxxxx.railway.app/ping). If the result is pong, the deployment succeeded.
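The /ping check can also be scripted. The following Go sketch is an illustration under assumptions, not part of the project: the names isPong and checkPing are hypothetical, and because this README does not state whether the body is plain text or JSON, the sketch only looks for the substring pong.

```go
package main

import (
	"fmt"
	"io"
	"net/http"
	"os"
	"strings"
	"time"
)

// isPong reports whether a /ping response body contains "pong".
// A substring match is used because the exact body format (plain text or
// JSON) is an assumption here.
func isPong(body string) bool {
	return strings.Contains(body, "pong")
}

// checkPing requests <base>/ping and reports whether the service answered
// with HTTP 200 and a body containing "pong".
func checkPing(base string) (bool, error) {
	client := &http.Client{Timeout: 10 * time.Second}
	resp, err := client.Get(strings.TrimRight(base, "/") + "/ping")
	if err != nil {
		return false, err
	}
	defer resp.Body.Close()
	body, err := io.ReadAll(resp.Body)
	if err != nil {
		return false, err
	}
	return resp.StatusCode == http.StatusOK && isPong(string(body)), nil
}

func main() {
	if len(os.Args) < 2 {
		fmt.Println("usage: pingcheck https://xxxxxxxx.railway.app")
		return
	}
	ok, err := checkPing(os.Args[1])
	if err != nil {
		fmt.Println("request failed:", err)
		os.Exit(1)
	}
	fmt.Println("deployment reachable:", ok)
}
```

Pass the domain name shown by Railway as the only argument.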

Docker Development

docker build -t lark-openai:latest .

docker run -d --name lark-openai -p 9000:9000 \
  --env APP_LANG=en \
  --env APP_ID=xxx \
  --env APP_SECRET=xxx \
  --env APP_ENCRYPT_KEY=xxx \
  --env APP_VERIFICATION_TOKEN=xxx \
  --env BOT_NAME=chatGpt \
  --env OPENAI_KEY="sk-xxx1,sk-xxx2,sk-xxx3" \
  --env API_URL="https://api.openai.com" \
  --env HTTP_PROXY="" \
  lark-openai:latest

Attention:

- APP_LANG is the language of the Lark bot, for example en, ja, vi, or zh-hk.
- BOT_NAME is the name of the Lark bot, for example chatGpt.
- OPENAI_KEY is the OpenAI key. If you have multiple keys, separate them with commas, for example sk-xxx1,sk-xxx2,sk-xxx3.
- HTTP_PROXY is the proxy address of the host machine, for example http://host.docker.internal:7890. If you don't have a proxy, you can leave this unset.
- API_URL is the OpenAI API endpoint address, for example https://api.openai.com. If you don't have a reverse proxy, you can leave this unset.
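To illustrate how a comma-separated OPENAI_KEY value could be spread across requests, here is a minimal round-robin sketch in Go. This README does not describe the project's actual load-balancing strategy, so round-robin is an assumption chosen for simplicity, and the names keyRing, newKeyRing, and Next are hypothetical.

```go
package main

import (
	"errors"
	"fmt"
	"strings"
	"sync/atomic"
)

// keyRing hands out API keys in round-robin order.
// This is an illustrative strategy, not the project's implementation.
type keyRing struct {
	next uint64 // kept first so 64-bit atomic access is aligned on 32-bit platforms
	keys []string
}

// newKeyRing parses a comma-separated key list such as the OPENAI_KEY
// value, skipping empty entries and surrounding whitespace.
func newKeyRing(raw string) (*keyRing, error) {
	var keys []string
	for _, k := range strings.Split(raw, ",") {
		if k = strings.TrimSpace(k); k != "" {
			keys = append(keys, k)
		}
	}
	if len(keys) == 0 {
		return nil, errors.New("no OpenAI keys configured")
	}
	return &keyRing{keys: keys}, nil
}

// Next returns the next key and is safe to call from multiple goroutines.
func (r *keyRing) Next() string {
	n := atomic.AddUint64(&r.next, 1) - 1
	return r.keys[n%uint64(len(r.keys))]
}

func main() {
	ring, err := newKeyRing("sk-xxx1,sk-xxx2,sk-xxx3")
	if err != nil {
		fmt.Println(err)
		return
	}
	for i := 0; i < 4; i++ {
		fmt.Println(ring.Next())
	}
}
```

Round-robin spreads calls evenly but does not account for per-key rate limits or failed keys; a production setup would likely need both.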

To deploy the Azure version

docker build -t lark-openai:latest .

docker run -d --name lark-openai -p 9000:9000 \
  --env APP_LANG=en \
  --env APP_ID=xxx \
  --env APP_SECRET=xxx \
  --env APP_ENCRYPT_KEY=xxx \
  --env APP_VERIFICATION_TOKEN=xxx \
  --env BOT_NAME=chatGpt \
  --env AZURE_ON=true \
  --env AZURE_API_VERSION=xxx \
  --env AZURE_RESOURCE_NAME=xxx \
  --env AZURE_DEPLOYMENT_NAME=xxx \
  --env AZURE_OPENAI_TOKEN=xxx \
  lark-openai:latest

Attention:

- APP_LANG is the language of the Lark bot, for example en, ja, vi, or zh-hk.
- BOT_NAME is the name of the Lark bot, for example chatGpt.
- AZURE_ON indicates whether to use Azure. Set it to true.
- AZURE_API_VERSION is the Azure API version, for example 2023-03-15-preview.
- AZURE_RESOURCE_NAME is the Azure resource name, as in https://{AZURE_RESOURCE_NAME}.openai.azure.com.
- AZURE_DEPLOYMENT_NAME is the Azure deployment name, as in https://{AZURE_RESOURCE_NAME}.openai.azure.com/openai/deployments/{AZURE_DEPLOYMENT_NAME}/chat/completions.
- AZURE_OPENAI_TOKEN is the Azure OpenAI token.
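The Azure variables above combine into a single chat completions endpoint. The Go sketch below shows one way to assemble it; the function name azureChatURL is hypothetical, and the path uses the /openai segment and api-version query parameter from Azure's REST API rather than anything read from this project's code.

```go
package main

import (
	"fmt"
	"net/url"
)

// azureChatURL builds the chat completions endpoint from the values of
// AZURE_RESOURCE_NAME, AZURE_DEPLOYMENT_NAME, and AZURE_API_VERSION.
// The function name is hypothetical.
func azureChatURL(resource, deployment, apiVersion string) string {
	q := url.Values{}
	q.Set("api-version", apiVersion)
	u := url.URL{
		Scheme:   "https",
		Host:     resource + ".openai.azure.com",
		Path:     "/openai/deployments/" + deployment + "/chat/completions",
		RawQuery: q.Encode(),
	}
	return u.String()
}

func main() {
	// Placeholder values; substitute your own resource and deployment names.
	fmt.Println(azureChatURL("my-resource", "my-deployment", "2023-03-15-preview"))
}
```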

Docker-Compose Development

Edit docker-compose.yaml: set the required environment variables under environment (or mount a configuration file through volumes), then run the following commands:

# Build the image

docker compose build

# Start the service

docker compose up -d

# Stop the service

docker compose down

Event callback address: http://IP:9000/webhook/event

Card callback address: http://IP:9000/webhook/card

- Get an OpenAI KEY (🙉 free keys are available for everyone to test deployment)

- Create a Lark bot

- Go to the Lark Open Platform, create an app, and get the App ID and App Secret

- Go to Features - Bot and create a bot

- Obtain the public address from cpolar, serverless, or Railway, and fill it in the "Event Subscription" section of the Lark bot backend. For example:

  - http://xxxx.r6.cpolar.top is the public address exposed by cpolar.

  - /webhook/event is the unified application route.

  - The final callback address is http://xxxx.r6.cpolar.top/webhook/event.

- In the "Bot" section of the Lark bot backend, fill in the request URL for message cards. For example:

  - http://xxxx.r6.cpolar.top is the public address exposed by cpolar.

  - /webhook/card is the unified application route.

  - The final request URL for message cards is http://xxxx.r6.cpolar.top/webhook/card.

- In the "Event Subscription" section, search for the three terms "Bot Join Group," "Receive Messages," and "Messages Read," and enable all the permissions listed for them.

Go to the permission management interface, search for "Image," and check "Get and upload image or file resources."

Finally, the following permissions will have been added:

- im:resource (Read and upload images or other files)

- im:message

- im:message.group_at_msg (Read group chat messages mentioning the bot)

- im:message.group_at_msg:readonly (Obtain group messages mentioning the bot)

- im:message.p2p_msg (Read private messages sent to the bot)

- im:message.p2p_msg:readonly (Obtain private messages sent to the bot)

- im:message:send_as_bot (Send messages as an app)

- im:chat:readonly (Obtain group information)

- im:chat (Obtain and update group information)

- Publish the version and wait for the approval of the enterprise administrator

If you run into problems, you can join the Lark group to discuss them.

| AI | SDK | Application |
|---|---|---|
| 🎒 OpenAI | Go-OpenAI | 🏅Feishu-OpenAI, 🎖Lark-OpenAI, Feishu-EX-ChatGPT, 🎖Feishu-OpenAI-Stream-Chatbot, Feishu-TLDR, Feishu-OpenAI-Amazing, Feishu-Oral-Friend, Feishu-OpenAI-Base-Helper, Feishu-Vector-Knowledge-Management, Feishu-OpenAI-PDF-Helper, 🏅Dingtalk-OpenAI, Wework-OpenAI, WeWork-OpenAI-Node, llmplugin |
| 🤖 AutoGPT | ------ | 🏅AutoGPT-Next-Web |
| 🎭 Stablediffusion | ------ | 🎖Feishu-Stablediffusion |
| 🍎 Midjourney | Go-Midjourney | 🏅Feishu-Midjourney, 🔥MidJourney-Web, Dingtalk-Midjourney |
| 🍍 文心一言 | Go-Wenxin | Feishu-Wenxin, Dingtalk-Wenxin, Wework-Wenxin |
| 💸 Minimax | Go-Minimax | Feishu-Minimax, Dingtalk-Minimax, Wework-Minimax |
| ⛳️ CLAUDE | Go-Claude | Feishu-Claude, DingTalk-Claude, Wework-Claude |
| 🥁 PaLM | Go-PaLM | Feishu-PaLM, DingTalk-PaLM, Wework-PaLM |
| 🎡 Prompt | ------ | 📖 Prompt-Engineering-Tutior |
| 🍋 ChatGLM | ------ | Feishu-ChatGLM |
| ⛓ LangChain | ------ | 📖 LangChain-Tutior |
| 🪄 One-click | ------ | 🎖Awesome-One-Click-Deployment |