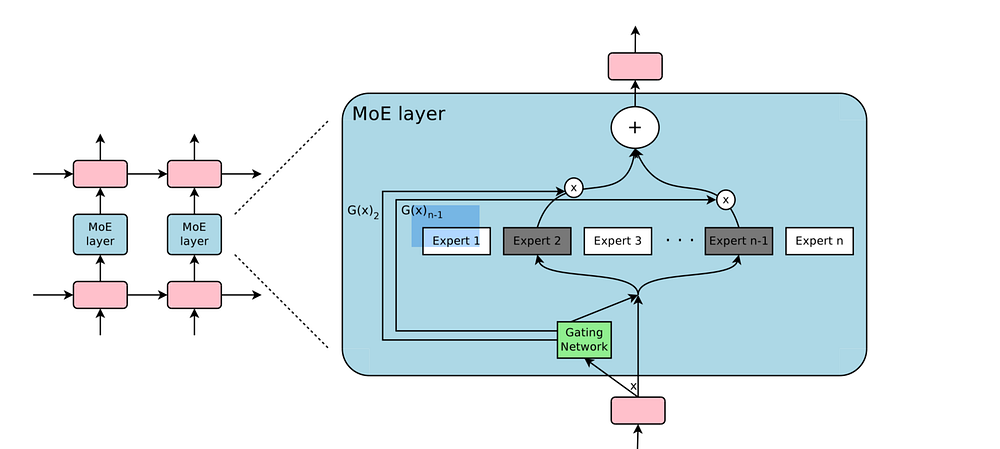

This repository contains a PyTorch re-implementation of the sparsely-gated Mixture-of-Experts (MoE) layer described in the paper *Outrageously Large Neural Networks: The Sparsely-Gated Mixture-of-Experts Layer* (Shazeer et al., 2017).
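For readers new to the layer, here is a minimal sketch of the paper's sparsely-gated forward pass. It is written in plain Python so it runs without PyTorch. It follows the paper's formulation: gate logits H(x) = x·W_g plus standard Gaussian noise scaled by softplus(x·W_noise), a softmax over only the k largest logits (every other gate is exactly zero), and an output y = Σ_i G(x)_i E_i(x) in which unselected experts are never evaluated. The helper names (`matvec`, `softplus`, `keep_top_k_softmax`, `moe_forward`), the toy experts, and the weight values are hypothetical. This is not the repository's code, and details such as weight initialization and load-balancing losses are omitted.

```python
import math
import random


def matvec(x, w):
    """Row vector x (length d) times matrix w (d rows, n columns)."""
    return [sum(x[d] * w[d][i] for d in range(len(x))) for i in range(len(w[0]))]


def softplus(z):
    """Numerically stable log(1 + exp(z))."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def keep_top_k_softmax(logits, k):
    """Softmax over the k largest logits; every other gate is exactly 0."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    m = max(logits[i] for i in top)  # subtract the max for numerical stability
    exps = {i: math.exp(logits[i] - m) for i in top}
    total = sum(exps.values())
    return [exps.get(i, 0.0) / total for i in range(len(logits))]


def moe_forward(x, experts, w_gate, w_noise, k, rng=None):
    """Return (y, gates) with y = sum_i G(x)_i * E_i(x).

    If rng (a random.Random) is given, Gaussian noise scaled by
    softplus(x . W_noise) is added to the gate logits, as during training.
    With rng=None the gating is deterministic.
    """
    logits = matvec(x, w_gate)
    if rng is not None:
        noise_scale = [softplus(z) for z in matvec(x, w_noise)]
        logits = [h + rng.gauss(0.0, 1.0) * s for h, s in zip(logits, noise_scale)]
    gates = keep_top_k_softmax(logits, k)
    y = [0.0] * len(x)  # assumption: each toy expert maps R^d -> R^d
    for g, expert in zip(gates, experts):
        if g == 0.0:
            continue  # sparsity: experts outside the top k are never evaluated
        y = [acc + g * out for acc, out in zip(y, expert(x))]
    return y, gates


# Usage: four toy experts E_i(x) = s_i * x, with d = 2 inputs and n = 4 experts.
experts = [lambda v, s=s: [s * e for e in v] for s in (1.0, 2.0, 3.0, 4.0)]
w_gate = [[0.0, 0.0, 1.0, 2.0], [0.0, 0.0, 0.0, 0.0]]
w_noise = [[0.0] * 4 for _ in range(2)]
y, gates = moe_forward([1.0, 1.0], experts, w_gate, w_noise, k=2)
# gates ≈ [0.0, 0.0, 0.269, 0.731], y ≈ [3.731, 3.731]
_, noisy_gates = moe_forward([1.0, 1.0], experts, w_gate, w_noise, k=2, rng=random.Random(0))
```

Only experts 2 and 3 (the two largest logits) are run. The noisy call may pick a different pair, but it still activates exactly k experts.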

The example in test.py was tested with torch v1.0.0 and Python v3.6.1 on CPU.

To install the requirements, run:

pip install -r requirements.txt

The file test.py contains an example illustrating how to train and evaluate the MoE layer with dummy inputs and targets. To run the example:

python test.py
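The test.py script itself is not reproduced here. The sketch below illustrates the same workflow without a framework: dummy inputs and targets, a training loop, then evaluation on held-out dummy data. It trains a toy MoE with top-k gating in pure Python. Everything in it is an assumption made for illustration, not the repository's test.py: scalar inputs, affine experts, the `abs(x)` target, hand-derived gradients, plain SGD, and no gating noise or load-balancing loss. The names `top_k_softmax`, `ToyMoE`, and `mse` are hypothetical.

```python
import math
import random


def top_k_softmax(logits, k):
    """Return (indices of the k largest logits, softmax gates over just those)."""
    sel = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    m = max(logits[i] for i in sel)  # subtract the max for numerical stability
    exps = [math.exp(logits[i] - m) for i in sel]
    total = sum(exps)
    return sel, [e / total for e in exps]


class ToyMoE:
    """Hypothetical scalar MoE: expert E_i(x) = a_i*x + b_i, gate logit h_i = w_i*x + c_i."""

    def __init__(self, num_experts, k, rng):
        def init():
            return [rng.gauss(0.0, 0.5) for _ in range(num_experts)]

        self.k = k
        self.a, self.b, self.w, self.c = init(), init(), init(), init()

    def forward(self, x):
        logits = [w * x + c for w, c in zip(self.w, self.c)]
        sel, gates = top_k_softmax(logits, self.k)
        outs = [self.a[i] * x + self.b[i] for i in sel]  # only selected experts run
        y = sum(g * o for g, o in zip(gates, outs))
        return y, sel, gates, outs

    def sgd_step(self, x, t, lr):
        """One SGD step on the squared error (y - t)^2; returns the pre-step loss."""
        y, sel, gates, outs = self.forward(x)
        dy = 2.0 * (y - t)  # dL/dy
        for i, g, o in zip(sel, gates, outs):
            # Expert gradients: dy/da_i = g_i * x and dy/db_i = g_i.
            # Gate gradient through the softmax over selected logits: dy/dh_i = g_i * (E_i - y).
            dh = dy * g * (o - y)
            self.a[i] -= lr * dy * g * x
            self.b[i] -= lr * dy * g
            self.w[i] -= lr * dh * x
            self.c[i] -= lr * dh
        return (y - t) ** 2


def mse(model, data):
    """Mean squared error of the model's predictions on (x, t) pairs."""
    return sum((model.forward(x)[0] - t) ** 2 for x, t in data) / len(data)


rng = random.Random(0)
target = abs  # hypothetical dummy target: piecewise linear, so routing can help
train_set = [(x, target(x)) for x in (rng.uniform(-1.0, 1.0) for _ in range(256))]
test_set = [(x, target(x)) for x in (rng.uniform(-1.0, 1.0) for _ in range(64))]

model = ToyMoE(num_experts=4, k=2, rng=rng)
loss_before = mse(model, test_set)
for epoch in range(50):
    rng.shuffle(train_set)
    for x, t in train_set:
        model.sgd_step(x, t, lr=0.05)
loss_after = mse(model, test_set)
print(f"test MSE before: {loss_before:.4f}, after: {loss_after:.4f}")
```

At each step, only the k selected experts and their gate logits receive a gradient. The discrete top-k choice itself is held fixed when differentiating.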

The code is based on the TensorFlow implementation that can be found here.